Audio-Visual and EEG-based Attention Modeling for Extraction of Affective Video Content

- Abstract

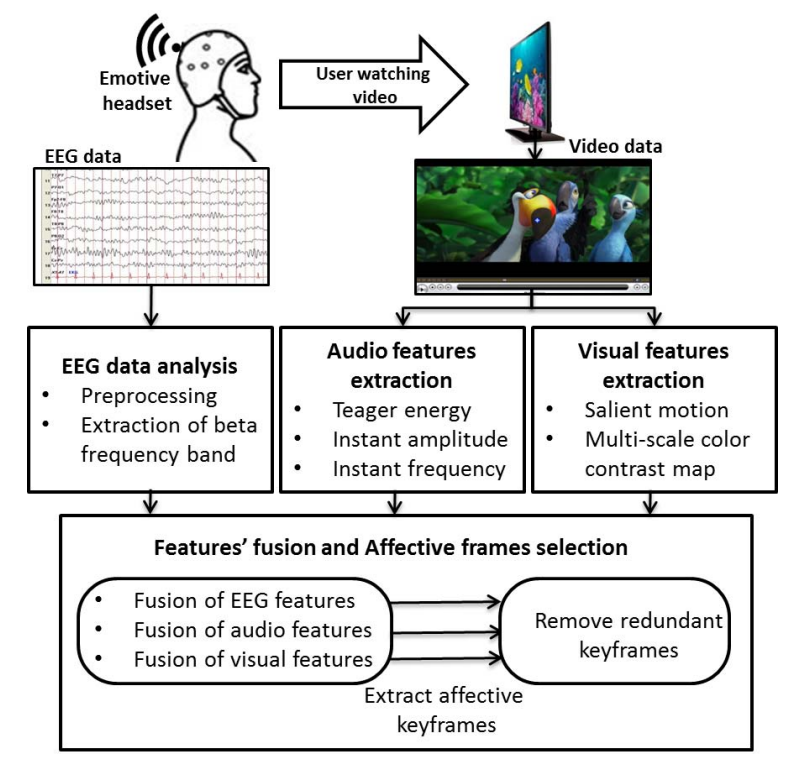

Video summarization aims to reduce redundancy and generate concise representations of video data. Extracting affective keyframes, which capture the intensity and type of emotion evoked in viewers, has become an important approach to video summarization. Traditional methods rely on audio-visual features alone; however, these modalities are often insufficient to capture human attention and semantic relevance accurately. Video content induces strong neural responses that can be measured with electroencephalography (EEG). This paper proposes an affective video content extraction framework that integrates EEG-based neural signals with audio-visual features to better model user attention and perception. The proposed approach bridges the gap between multimedia content and human cognitive responses, enabling the extraction of more relevant and personalized video summaries. Experimental results demonstrate that the method reflects user preferences and improves the quality of affective video summarization.
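The abstract does not say how the EEG and audio-visual cues are combined, so the following is only an illustrative sketch of one plausible reading: a weighted late fusion of per-frame attention scores, followed by keyframe selection. It assumes that each modality has already been reduced to one score per frame. The function names, the weights, and the minimum-gap rule are hypothetical and are not taken from the paper.

```python
import numpy as np


def min_max(x):
    """Rescale a 1-D score curve to [0, 1]; a constant curve maps to zeros."""
    x = np.asarray(x, dtype=float)
    span = x.max() - x.min()
    if span == 0:
        return np.zeros_like(x)
    return (x - x.min()) / span


def fuse_attention(visual, audio, eeg, weights=(0.3, 0.2, 0.5)):
    """Weighted late fusion of three per-frame attention curves.

    The weights are an assumption made for illustration only.
    """
    curves = np.stack([min_max(visual), min_max(audio), min_max(eeg)])
    w = np.asarray(weights, dtype=float)
    w = w / w.sum()  # normalise so the fused curve stays in [0, 1]
    return w @ curves


def select_keyframes(attention, k=3, min_gap=2):
    """Greedily pick up to k frames with the highest fused attention,
    keeping selected frames at least min_gap frames apart."""
    order = np.argsort(-np.asarray(attention, dtype=float), kind="stable")
    chosen = []
    for idx in order:
        if all(abs(int(idx) - c) >= min_gap for c in chosen):
            chosen.append(int(idx))
        if len(chosen) == k:
            break
    return sorted(chosen)


if __name__ == "__main__":
    # Toy per-frame scores (made up for the example, not experimental data).
    visual = [0, 1, 0, 0, 0, 0]
    audio = [0, 1, 0, 0, 0, 0]
    eeg = [0, 0, 0, 0, 1, 0]
    fused = fuse_attention(visual, audio, eeg)
    print(select_keyframes(fused, k=2, min_gap=2))
```

In this toy setting, raising the EEG weight shifts selection toward frames where the viewer's measured response peaks, which is one simple way a summary could be personalized. A real system would need to synchronise the EEG recording with the frame timeline before any such fusion.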