Divide-and-Conquer Based Ensemble to Spot Emotions in Speech using MFCC and Random Forest

Abstract

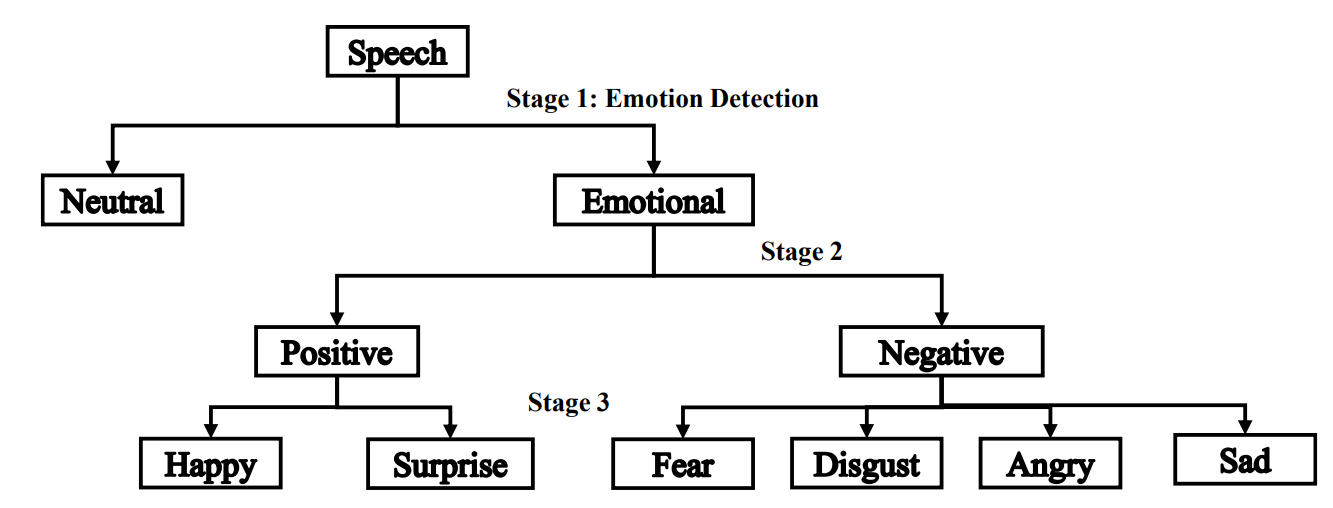

Speech signals convey not only linguistic information but also paralinguistic attributes such as gender, age, and emotional state, which are useful in many speech analysis applications. This paper proposes a divide-and-conquer ensemble classification framework for speech emotion recognition. The approach exploits the intrinsic hierarchical structure of emotions to construct an emotion tree, which decomposes the recognition task into smaller sub-problems. The framework operates in three stages: it first classifies speech as neutral or emotional, then categorizes emotional speech as positive or negative, and finally identifies the specific emotion within that category. Classification uses Mel-Frequency Cepstral Coefficient (MFCC) features and Random Forest classifiers. Experiments on benchmark datasets show that the proposed method achieves better recognition performance than several existing approaches.
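The three-stage emotion tree described above can be sketched as a cascade of Random Forest classifiers, one per node of the tree. This is a minimal illustration under several assumptions, not the authors' implementation: it uses scikit-learn's `RandomForestClassifier`, it assumes each utterance has already been reduced to a fixed-length vector (for example, the per-coefficient mean of its MFCC frames, such as those returned by `librosa.feature.mfcc`), and the positive/negative grouping in `POSITIVE` and `NEGATIVE` as well as the class name `EmotionTreeClassifier` are hypothetical choices made for the example.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

# Hypothetical valence grouping; the paper's actual emotion tree may differ.
POSITIVE = {"happy", "surprised"}
NEGATIVE = {"angry", "sad", "fearful"}


def _rf(seed):
    # One Random Forest per node of the emotion tree.
    return RandomForestClassifier(n_estimators=100, random_state=seed)


class EmotionTreeClassifier:
    """Hypothetical divide-and-conquer cascade over fixed-length feature vectors."""

    def fit(self, X, y):
        X = np.asarray(X, dtype=float)
        y = np.asarray(y)
        emotional = y != "neutral"
        # Stage 1: neutral vs. emotional, trained on every utterance.
        self.stage1 = _rf(0).fit(X, emotional)
        # Stage 2: positive vs. negative, trained on emotional utterances only.
        Xe, ye = X[emotional], y[emotional]
        positive = np.isin(ye, list(POSITIVE))
        self.stage2 = _rf(1).fit(Xe, positive)
        # Stage 3: one leaf classifier per valence group for the specific emotion.
        self.leaf_pos = _rf(2).fit(Xe[positive], ye[positive])
        self.leaf_neg = _rf(3).fit(Xe[~positive], ye[~positive])
        return self

    def predict(self, X):
        X = np.asarray(X, dtype=float)
        out = np.full(len(X), "neutral", dtype=object)
        # Route each sample down the tree; only emotional samples reach stage 2.
        idx = np.flatnonzero(self.stage1.predict(X).astype(bool))
        if idx.size:
            is_pos = self.stage2.predict(X[idx]).astype(bool)
            pos_idx, neg_idx = idx[is_pos], idx[~is_pos]
            if pos_idx.size:
                out[pos_idx] = self.leaf_pos.predict(X[pos_idx])
            if neg_idx.size:
                out[neg_idx] = self.leaf_neg.predict(X[neg_idx])
        return out


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    labels = ["neutral", "happy", "surprised", "angry", "sad", "fearful"]
    # Synthetic stand-ins for 13-dim mean-MFCC vectors: one cluster per label.
    centers = {lab: rng.normal(scale=10.0, size=13) for lab in labels}
    X = np.vstack([centers[lab] + rng.normal(size=(30, 13)) for lab in labels])
    y = np.repeat(labels, 30)
    clf = EmotionTreeClassifier().fit(X, y)
    print("training accuracy:", np.mean(clf.predict(X) == y))
```

Each node sees only the samples routed to it, so every classifier solves a smaller problem than a flat six-way classifier would. The trade-off of any such cascade is that a mistake at an early stage (for example, labeling an emotional utterance as neutral) cannot be corrected further down the tree.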