Speech Emotion Recognition from Spectrograms with Deep Convolutional Neural Network

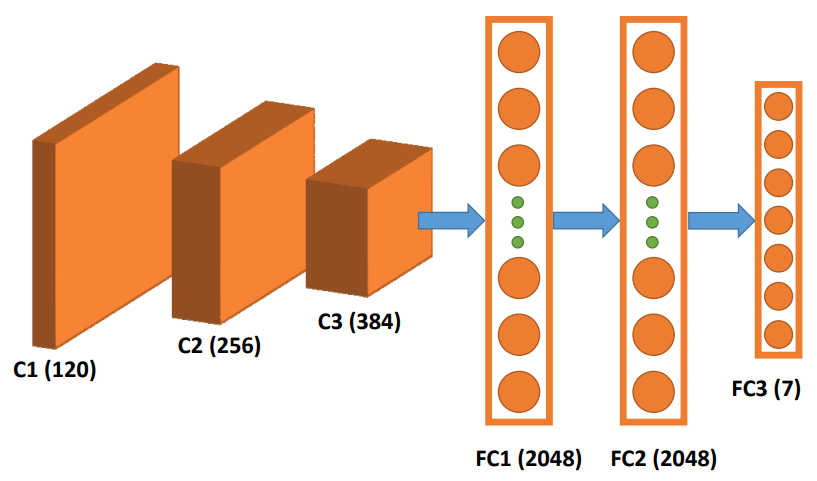

This paper presents a method for speech emotion recognition using spectrograms and a deep convolutional neural network (CNN). Spectrograms generated from the speech signals serve as the input to the CNN, which uses three convolutional layers and three fully connected layers to extract discriminative features and predict one of seven emotion classes. The model is trained on spectrograms derived from the Berlin Emotional Speech Dataset. The study also explores the effectiveness of transfer learning by fine-tuning a pre-trained AlexNet model for the same task. Experimental results indicate that the proposed model trained from scratch outperforms the fine-tuned model and that the approach classifies emotion from speech signals accurately and efficiently.
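As a rough illustration of the input representation described above, the following sketch computes a log-magnitude spectrogram with a short-time Fourier transform using only NumPy. It is not the authors' preprocessing: the abstract does not state the frame length, hop size, window, or sampling rate, so `frame_len=512`, `hop=256`, the Hann window, the 16 kHz rate, and the helper names `spectrogram` and `to_unit_range` are all assumptions made for the example. A synthetic tone stands in for a speech recording.

```python
import numpy as np


def spectrogram(signal, frame_len=512, hop=256, eps=1e-10):
    """Log-magnitude spectrogram, shape (frequency bins, time frames).

    frame_len and hop are illustrative defaults, not values from the paper.
    """
    signal = np.asarray(signal, dtype=np.float64)
    # Pad very short signals so that at least one full frame exists
    if signal.size < frame_len:
        signal = np.pad(signal, (0, frame_len - signal.size))
    n_frames = 1 + (signal.size - frame_len) // hop
    window = np.hanning(frame_len)
    # Index matrix that slices the signal into overlapping frames
    idx = np.arange(frame_len)[None, :] + hop * np.arange(n_frames)[:, None]
    frames = signal[idx] * window
    # One-sided FFT magnitude per frame: (n_frames, frame_len // 2 + 1)
    mag = np.abs(np.fft.rfft(frames, axis=1))
    # Log compression in decibels, transposed to (frequency, time)
    return 20.0 * np.log10(mag + eps).T


def to_unit_range(spec):
    """Rescale a spectrogram to [0, 1] so it can be treated like an image."""
    lo, hi = spec.min(), spec.max()
    return (spec - lo) / (hi - lo) if hi > lo else np.zeros_like(spec)


# Synthetic 1-second, 440 Hz tone at an assumed 16 kHz sampling rate
sr = 16000
t = np.arange(sr) / sr
tone = np.sin(2 * np.pi * 440.0 * t)
spec = to_unit_range(spectrogram(tone))
print(spec.shape)
```

The resulting two-dimensional array can be resized and fed to a CNN in the same way as a grayscale image, which is the general idea the abstract describes; the network itself is not sketched here.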